In search of the mystery of the cortical column and human-like general intelligence

Today, Astera Institute announced that I am leading its AGI research program with an ambitious research agenda. It’s backed by $1B+ in long-term financial support for building NeuroAI that learns, thinks, and plans efficiently like the human brain. Joining me on this quest as a lead researcher is my friend and collaborator Miguel Lázaro-Gredilla, who was at Google DeepMind before this. This blog post is written by both of us to outline our goals and the broad themes we will be pursuing.

We are motivated by two interconnected goals:

Understanding the human brain.

Building human-like general intelligence from first principles: data-efficient, robust, and able to learn from experience.

We believe that treating these two problems as parts of the same puzzle is important for making progress on each, a vision shared by Astera Institute founders Jed and Seemay. At Astera AI, we are creating a unique AGI lab to tackle the fundamental problems of intelligence, working alongside Doris Tsao’s Astera Neuro to close the loop with neuroscience.

We were drawn to Astera for the next phase of our work because Astera recognizes that reverse engineering the brain is one of the most challenging research problems, requiring bold bets, navigating blind alleys, making mistakes, and recovering from them. With a longer, fully funded timeline, we can focus on the difficult fundamentals instead of being distracted by short-term commercialization pressures or vibes. We can explore directions that are important but currently unappreciated. Having neuroscience experiments under the same roof as AI research will help us unravel unexpected mysteries about both AI and the brain.

One of the potential trade-offs we considered as we explored a home for this work is that non-profits often don’t allow for the ambitious, nimble pace that we would need to make progress. At Astera, we’ve been given the explicit responsibility of running our team like a start-up but with the advantages of philanthropy. We’re excited to bring our perspectives to this experiment and to recruit like-minded talent.

In the rest of this post we will describe some of the research themes we will be emphasizing. This is not meant to be comprehensive, but rather a starting point. As we progress, we remain committed to evolving our perspective and adapting our priorities based on new evidence, and we look forward to maintaining an active, transparent dialogue with the research community.

What are the general principles of human-like intelligence?

We look to the brain for hints on inductive biases and algorithms: What is the “Goldilocks” set of architectural and algorithmic properties that makes human learning data-efficient, planning-compatible, generally applicable across a variety of domains, and able to handle lifelong contexts? The uniformity of the cortical column and its preservation across mammals suggest that such general principles exist, and can be understood from studying the systems involving the cortex, thalamus, hippocampus, and the limbic system.

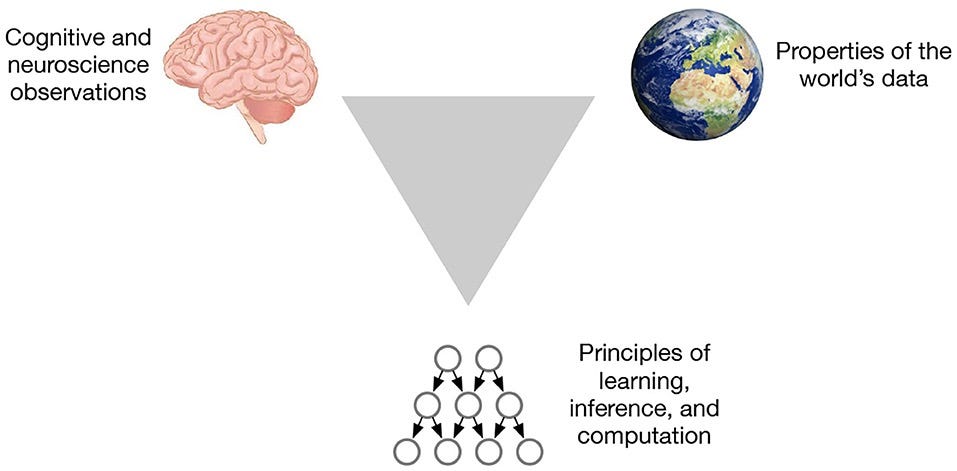

How do you tease apart the general principles from all the messy details of the brain? We will do that by triangulating on these principles using 1) observations from neuroscience; 2) properties of the world; and 3) computational/algorithmic models. A property of the brain is likely to be a general principle only when we can explain why it exists based on algorithmic ideas and the organization of the world.

What does this triangulation look like in practice? Below, we outline the specific research themes that emerge from this approach, starting with how we view the acquisition of knowledge itself. You might notice us suddenly changing our vocabulary from latent variables to cortical circuits; that’s just a reflection of how we think about these problems from multiple perspectives.

Learning from experience, not accumulated human knowledge

The written knowledge current AIs learn from was acquired by humans over centuries through the process of interacting, tool-building, and experimenting in the world. We want AIs that learn and create knowledge from experience in the world, not just from knowledge that humans have already accumulated.

Learning fundamentally different world models

While today’s world models produce visually impressive full-frame videos, predicting pixel-level details of the next frame is not useful for long-term planning or reasoning. Instead, we will focus on world models with the following properties:

Hierarchical latent variables: Hierarchical latent variable models that can be queried in flexible ways will be required for mental simulation and situated commonsense reasoning.

Causally structured and compositional: We want our models to be able to learn the causal relationships in the world, which is necessary to drive the counterfactual reasoning humans exhibit, not just predict the next frame or token.

Reasoning is everywhere: Models should be able to reason without language tokens.

Modern LLMs have demonstrated the utility of inference-time compute for reasoning. However, these reasoning computations are currently limited to language tokens. We believe that, to achieve human-like intelligence, reasoning should be a distributed process that happens in all the components of a cognitive architecture, including visual and motor systems, concept systems, and episodic memory. AI agents should be capable of active inference, acting to gather more information that reduces uncertainty, and of counterfactual reasoning, mentally simulating alternative worlds.

Episodic memory and continual learning as an integral part of world models and reasoning

Episodic memory is the record of your experience of the world, as interpreted through your current world model. World models should offer a scaffold for interpreting this experience and anchoring those memories. The memories are then used for reasoning in combination with the world model. When you think about where you might have left your keys, you are using a combination of your world model and the episodic memory of places where you have been. These episodic memories are then used to update your world models, resulting in continual learning.

Neuroscience for AI

Neuroscience and cognitive science offer important insights into how the disparate-looking requirements about latent variables, causality, memory, planning, etc. are brought together into a coherent system that is elegant and efficient. Here we highlight a few of the neuroscience observations that guide our research.

Feedback/recurrent connections: Most AI systems work in a pure feed-forward manner, whereas our brains are highly recurrent. Feedback connections between cortical columns could be part of test-time inference computations.

Dynamics of reasoning: Cortical and hippocampal dynamics (the feed-forward, feedback, and lateral flow of information) offer important clues about the speed and nature of inference computations. In the hippocampus, these dynamics appear as various forms of replay and as sharp-wave ripples.

Top-down attention control & binding: The ability to pay top-down attention to objects and features seems to be an inherent property of the cortical hierarchy. Moreover, the cortex also seems to have a unity of perception where it can bind together and track the different properties of an object. The interactions between the cortex, thalamus, and hippocampus hold important clues about the nature of these computations.

Schemas and structure-based transfer: The ability to extract abstract knowledge—schemas—in the form of flexible graphs might be an important component of human data efficiency and generalization. Modern neuroscience experiments are shedding light on how our brains learn schemas and utilize them for behavior.

Local synaptic plasticity rules and dendritic computations: Brain networks adapt via local synaptic plasticity and dendritic computations. We are rapidly gaining a wealth of empirical data on local plasticity mechanisms that operate at different timescales to self-organize decentralized components into circuits capable of executing complex algorithmic tasks.

Getting consciousness out of mysticism

Our subjective experience of consciousness is one of the most mysterious things our brains create. Is this an illusion? Can artificial agents have it? Can it be turned on or off, or adjusted in degrees? Does it have a performance implication? While several theories exist that address these questions partially, we think a significant amount of further research is needed to address these questions satisfactorily. We are keenly interested in this topic and hope to provide insights through tight iterations between theory, model-building, and experiments, something Astera Institute is uniquely positioned for.

Closing the loop between AI and neuroscience

Theory-building and understanding are never purely bottom-up or purely top-down processes. They require combining both directions and iterating. Advances in neurotechnology give us unprecedented access to the brain: fine-grained connectivity maps and real-time recordings from thousands of individual neurons. Closing the loop between AI and neuroscience will involve judicious, clever experiments that target specific gaps in our understanding, using the data not just to map the brain, but to test and refine the underlying “algorithms” of intelligence.

On the AI side, it will involve addressing the real challenges to our theories that arise from animal and human performance, and utilizing the representational and algorithmic insights that neuroscience experiments generate. We believe the shortcomings of current AI approaches are becoming increasingly apparent to those paying close attention. In contrast, AI approaches based on neuroscience insights are likely to be power-efficient, elegant, scalable, and controllable.

We are tremendously excited about the possibility of a true two-way scientific engine between AI and neuroscience—where better brain experiments produce better computational theories, and better computational theories produce sharper, more revealing brain experiments. If you are excited by this vision, join us!

Dileep George & Miguel Lázaro-Gredilla
